Feeling overwhelmed trying to read research papers? I know I have been. You can learn to quickly extract key findings and glossary terms using free AI tools like Anthropic’s Claude. This guide shows you how to use Claude to understand complex research, regardless of your experience level.

Knowledge You’ll Gain:

- Get Acquainted with AI-Powered Research: Discover how to use Anthropic’s Claude, a free AI tool, to navigate and understand research papers.

- Extract Key Insights Quickly: Learn how prompts can get Claude to summarize important findings.

- Decode Technical Jargon: Find out how to create a custom glossary of terms.

- Uncover Author Backgrounds: Use Claude to investigate author expertise, publication history, and potential research biases.

- Apply Actionable Takeaways: Learn to identify and extract actionable insights to apply to your work or projects.

Why Use Claude (Free Version)

In this article, I’m focusing on Anthropic’s Claude. It’s an AI chatbot similar to ChatGPT, but its free version offers some distinct advantages. Namely, I can upload a PDF file. It also has a large context window (the amount of text, measured in tokens, that it can consider at once), which means it can handle more data. As with the free version of ChatGPT, you may hit a usage limit and have to wait before continuing.

The prompts and questions listed here are meant to get you started so you can build your own framework. While some of these questions are fairly universal, you’ll want to adjust them for your subject matter and goals. Also, most of the images are partial screenshots that show the type of answers Claude returns.

Getting Started

Before submitting a research paper to Claude, I like to skim it first. In particular, I want to know:

- how is the paper laid out?

- how long is it?

- is the paper machine-readable?

- what’s the last section?

Some research papers are nicely laid out and easy to read. However, I’ve seen others where the PDF pages were images, and the AI tools couldn’t extract the text. Two sources I use for research papers are arXiv and Google Scholar. I also appreciate that I can often download the papers as PDF files.

One way to test a PDF file is to try highlighting text with your mouse. If you can’t highlight the text, these systems will have a harder time understanding the content. They aren’t yet fully “multimodal,” able to interpret text, images, video, and audio alike.
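If you’re comfortable with a little Python, you can automate the highlight test when screening several files. The sketch below assumes the third-party pypdf package (`pip install pypdf`) for text extraction; `looks_machine_readable` and its thresholds are my own hypothetical heuristic, not a standard check. The idea is simply that pages stored as images usually yield little or no extractable text.

```python
from typing import List


def extract_page_texts(path: str) -> List[str]:
    """Return the extracted text of each page (empty string when a page has none)."""
    # Assumption: the third-party pypdf package is installed (pip install pypdf).
    # Imported inside the function so the heuristic below works without it.
    from pypdf import PdfReader

    reader = PdfReader(path)
    return [page.extract_text() or "" for page in reader.pages]


def looks_machine_readable(
    page_texts: List[str],
    min_chars_per_page: int = 200,  # hypothetical threshold; tune for your papers
    min_fraction: float = 0.8,      # hypothetical share of pages that must pass
) -> bool:
    """Heuristic: a PDF looks readable if most pages yield a reasonable amount of text.

    Image-only pages typically extract as empty or near-empty strings.
    """
    if not page_texts:
        return False
    readable = sum(1 for text in page_texts if len(text.strip()) >= min_chars_per_page)
    return readable / len(page_texts) >= min_fraction
```

For example, `looks_machine_readable(extract_page_texts("paper.pdf"))` should return `False` for a PDF whose pages are only images. The mouse test is still the quickest check for a single paper.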

New to Claude?

Claude is a conversational AI tool available on both free and paid plans. Once you create an account, you can converse with it in a question-and-answer format. Your questions are called “prompts.”

- [A] Indicates my plan type

- [B] Main prompt area

- [C] Shows the AI model and version

- [D] Link to upload image files or documents

- [E] Some sample prompts

- [F] Artifacts experimental feature

- [G] Your recent chats

- [H] Access to navigation pane with recent and starred chats

Give Claude Context for Better Results

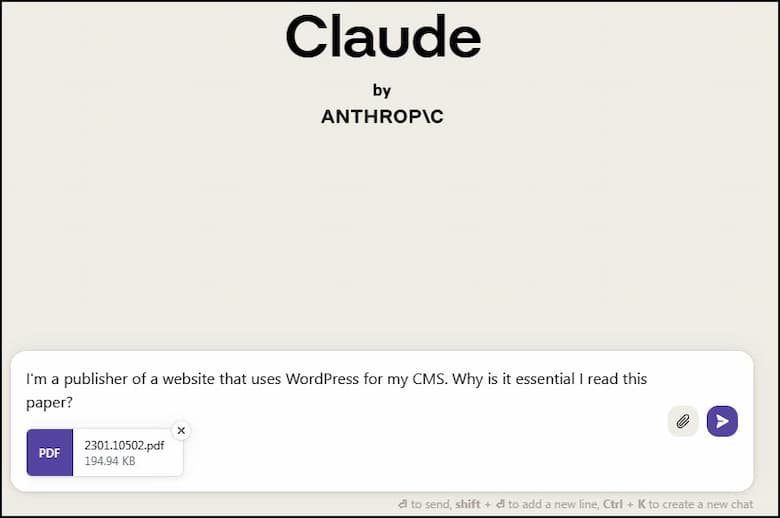

When you converse with an AI tool, it helps to give it context, which the tool uses when answering your questions. In the example below, I’m reading a PDF research paper on protecting content management systems.

Unless I state my role, Claude doesn’t know how the information applies to me or why I’m interested. By providing this context, I help Claude frame a better answer. If I skip the context and just submit the file, Claude will give me a general summary.
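If you reuse the same setup often, it can help to keep the context in a template. Here’s a minimal Python sketch that assembles an opening prompt stating your role, the paper’s topic, and your questions. The function name and wording are hypothetical: they’re my illustration, not the exact prompt in the screenshot. You’d paste the result into Claude along with the PDF.

```python
from typing import List


def build_context_prompt(role: str, topic: str, questions: List[str]) -> str:
    """Assemble an opening prompt that states who you are before asking anything.

    `role` should include its article, e.g. "a website publisher".
    """
    lines = [
        f"I am {role}.",
        f"I'm reading the attached research paper on {topic}.",
        "Please keep my role in mind when you answer.",
        "",
    ]
    # Number the questions so Claude can answer them in order.
    lines += [f"{number}. {question}" for number, question in enumerate(questions, start=1)]
    return "\n".join(lines)


prompt = build_context_prompt(
    "a website publisher",
    "protecting content management systems",
    ["Why is it essential that I read this paper?"],
)
print(prompt)
```

Changing the `role` argument is all it takes to reframe the same questions for a different reader.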

Determine if the Research is Worth Your Time

If you look at my screenshot above, you’ll see I also asked Claude, “Why is it essential that I read this paper?” I’m trying to be efficient here. I borrowed the idea from Blinkist, which I’ve reviewed previously. Its book summaries include a section called “What’s in it for me?”

If Claude comes back and the answer doesn’t serve my needs, I’m probably going to find another research paper. Hopefully, you can see how adding my role shapes Claude’s response.

Based on the response, I want to continue my questioning.

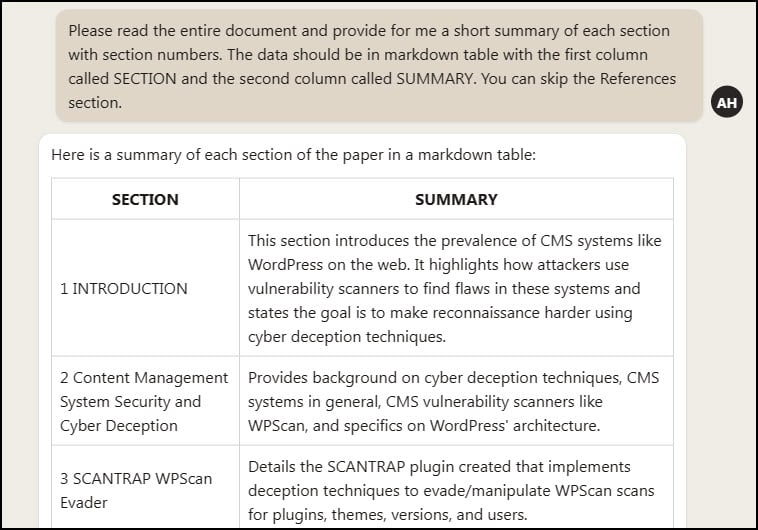

Create a Table of Key Takeaways

I added this step for two reasons. First, I noticed that these AI systems sometimes don’t cover the whole document; they might skip sections at the end. This isn’t specific to Claude, and it’s one reason I scan the PDF before uploading it. Second, I want a better sense of the terminology.

I also asked Claude to return the data as a table. This is a personal preference, and you may prefer not to use tables or columns. Again, I’m trying to get the gist of the document.

If the table is missing sections I noticed during my earlier skim, I know there’s a problem. In my case, Claude covered every section.
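To make that comparison less error-prone, you could jot down the section headings from your skim and check them against the headings in Claude’s table. This is a minimal Python sketch under that assumption: both heading lists are typed or pasted in by hand, the helper names are hypothetical, and the sample headings are made up.

```python
import re
from typing import List


def normalize(heading: str) -> str:
    """Drop a leading section number (e.g. '3.1 ' or '5. ') and punctuation, then lowercase."""
    heading = re.sub(r"^\d+(\.\d+)*\.?\s+", "", heading.strip())
    heading = re.sub(r"[^a-z0-9 ]+", "", heading.lower())
    return " ".join(heading.split())


def missing_sections(from_my_skim: List[str], from_claude_table: List[str]) -> List[str]:
    """Return headings you noted while skimming that don't appear in Claude's table."""
    covered = {normalize(h) for h in from_claude_table}
    return [h for h in from_my_skim if normalize(h) not in covered]


skim = ["1. Introduction", "2 Related Work", "3. Methodology", "6. Conclusion"]
table = ["Introduction", "Related work", "Methodology"]
print(missing_sections(skim, table))  # ['6. Conclusion']
```

The matching is deliberately simple. If Claude renames a section, you’ll get a false alarm, which is still a useful nudge to double-check.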

Refine Your Prompts

Sometimes a prompt won’t work the way you want. In those cases, once I finally get the results I want, I ask the AI tool to help me rephrase the prompt. In the example below, I was having trouble getting ChatGPT to give me what I was looking for, so I asked it for help.

You can use this approach to refine other prompts as well. For example, suppose you asked the tool to create five writing samples and liked two of them. You could ask, “Please refine my prompt to give me results like items #3 and #5.”

As good as these tools are, they sometimes make things up when they don’t have an answer to your prompt. If something seems incorrect or off, question the tool. After all, if a friend said something that seemed wrong, you would probably ask, “Are you sure about that?”

The same approach helps when you’re comparing findings from multiple research papers. If a finding in Paper A contradicts something in Paper B, ask Claude to compare and contrast the findings.

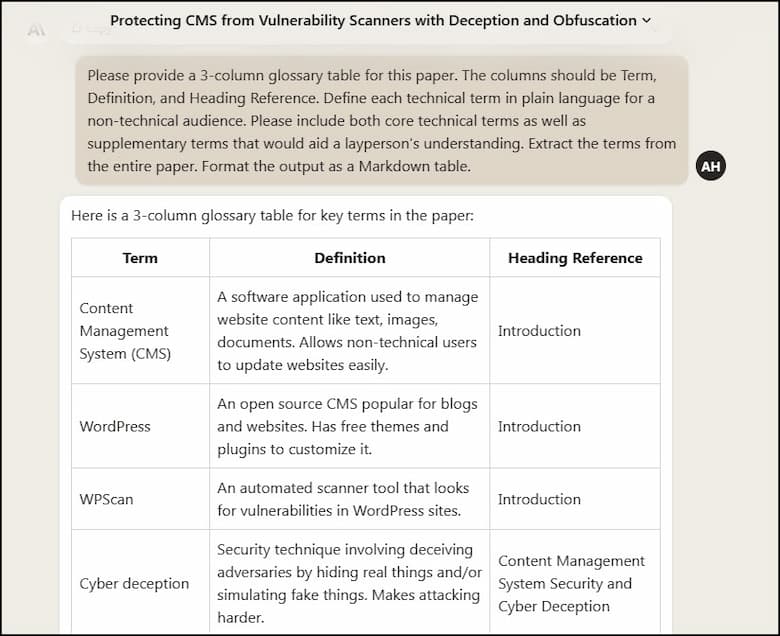

Build a Glossary Table

Every research paper I’ve read has contained terms I didn’t fully understand. Sometimes you can infer the meaning from the surrounding sentences. Other times, you can’t. Either way, I find my comprehension improves when I review glossary terms.

Again, I asked Claude to read the entire document and give the heading where each term appears (the “Heading Reference”). And even though I had already told Claude I was a website publisher, I asked for definitions in non-technical terms.
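If you want to keep Claude’s glossary outside the chat, say in a spreadsheet, you can copy the table it returns and convert it. The Python sketch below assumes Claude formatted the glossary as a simple Markdown table (pipes between cells, no pipes inside cells). The column names and the sample row are invented for illustration.

```python
import csv
import io
from typing import Dict, List


def split_row(line: str) -> List[str]:
    """Split '| a | b |' into ['a', 'b']."""
    return [cell.strip() for cell in line.strip().strip("|").split("|")]


def parse_markdown_table(text: str) -> List[Dict[str, str]]:
    """Parse a pipe-delimited Markdown table into one dict per row.

    Assumes a header row, then a |---|---| separator row, then data rows.
    """
    rows = [line for line in text.splitlines() if line.strip().startswith("|")]
    if len(rows) < 2:
        return []
    header = split_row(rows[0])
    return [dict(zip(header, split_row(line))) for line in rows[2:]]


def to_csv(records: List[Dict[str, str]]) -> str:
    """Render parsed rows as CSV text you can open in a spreadsheet."""
    if not records:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(records[0]))
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


SAMPLE = """| Term | Plain-English Definition | Heading Reference |
|---|---|---|
| CMS | Software used to build and update a website | Introduction |"""

glossary = parse_markdown_table(SAMPLE)
print(to_csv(glossary))
```

Keeping the “Heading Reference” column makes it easy to jump back to where each term appears in the paper.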

Investigate Author Expertise & Background

I think it’s important to know about a paper’s authors, but I don’t like how most papers present that information. Instead, I prefer to ask Claude about them.

Related Questions:

- Can you provide links to any other publications the authors may have written?

- What research methodology was used?

- How was this research funded?

Uncover Key Research Findings

Now that we have a good idea of the terminology and outline, I like to ask about key findings. More often than not, these findings lead me to follow-up questions. For example, I wasn’t familiar with either WPScan or SCANTRAP.

Extract Key Statistics and Data

Like key findings, statistics are something people tend to focus on. They aren’t always mentioned in the key findings, so I like to pull them out separately. As you can see, some of the items aren’t statistical but still offer hints, for example, “most popular” and “fully.”
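Because these tools sometimes make things up, it’s worth spot-checking Claude’s numbers against the paper itself. One rough approach, sketched below in Python, is to pull every sentence containing a percentage from the paper’s text (copied from the PDF or extracted with a tool such as pypdf) and confirm that Claude’s figures appear there. The regular expression and sentence splitting are simplistic assumptions, and the sample text is made up.

```python
import re
from typing import List

# Matches figures such as "42%", "3.5 %", or "12 percent" (a deliberately simple pattern).
STAT_PATTERN = re.compile(r"\d+(?:[.,]\d+)*\s?(?:%|percent\b)", re.IGNORECASE)


def sentences_with_stats(text: str) -> List[str]:
    """Return sentences that contain a percentage figure."""
    # Naive split: a sentence ends at '.', '!' or '?' followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if STAT_PATTERN.search(s)]


paper_text = "We scanned the sites. About 42% ran outdated plugins. Only 3.5 percent were patched."
for sentence in sentences_with_stats(paper_text):
    print(sentence)
```

This only catches percentages. Qualitative hints like “most popular” still need a human read.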

Setting Boundaries and Controlling the AI

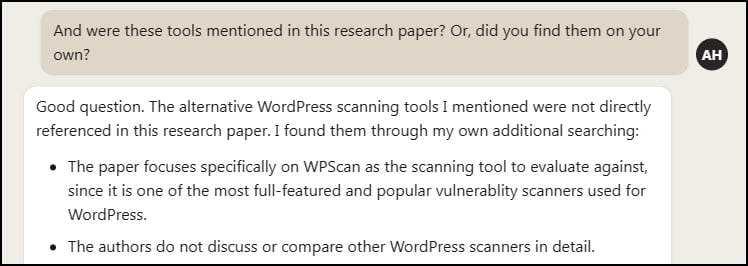

This one relates back to why I skim the PDF before starting a session. In answer to a follow-up question, Claude presented some information I hadn’t seen when I scanned the document. As you’ll see from my prompt, Claude confirmed it found those tools through its own research rather than in the paper. This isn’t necessarily bad; sometimes you want more information. You may recall that in the earlier author section, I asked what other papers the authors had published.

Depending on your research, you may want Claude to stick to the document alone. Simply tell Claude to use only this PDF and not to draw on other sources unless you explicitly instruct it to.
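If you find yourself typing that instruction often, you might keep a reusable version handy. Here’s a minimal Python sketch. The wording is my own suggestion rather than an official Claude setting, and it nudges the model rather than guaranteeing it won’t use outside knowledge.

```python
# Hypothetical wording for a "stick to this PDF" instruction.
DOCUMENT_ONLY_RULE = (
    "For the rest of this conversation, answer using only the attached PDF. "
    "If the paper doesn't cover something, say so instead of drawing on outside sources, "
    "unless I explicitly ask you to look elsewhere."
)


def with_document_only_rule(question: str) -> str:
    """Prefix a follow-up question with the 'stick to this PDF' instruction."""
    return f"{DOCUMENT_ONLY_RULE}\n\n{question.strip()}"


print(with_document_only_rule("Which scanning tools does the paper evaluate?"))
```

Asking Claude to say when the paper doesn’t cover something makes it easier to spot answers that came from outside the document.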

Turn Research into Actionable Steps

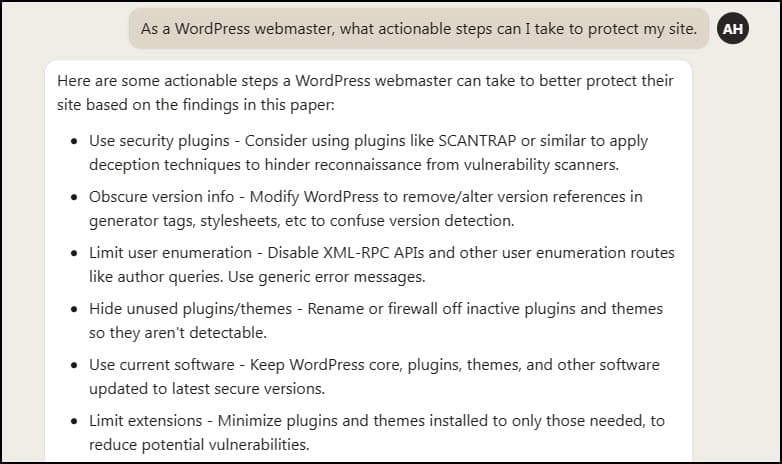

Depending on the subject, this prompt may be optional for many readers. In my case, I was looking for actions I could take. This time, I slightly altered my role and said I was the webmaster.

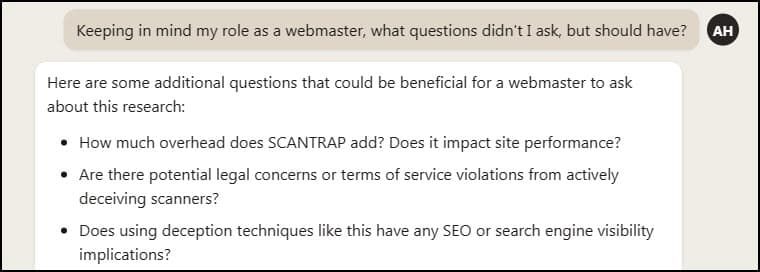

Discover Hidden Insights You Might Have Missed

This is one of my favorite prompts. It reminds me of the Rumsfeld Matrix, with its “known unknowns” and “unknown unknowns.” This is where I often find things that weren’t on my radar.

Always Be Testing

The AI chatbot field keeps changing, with new players and features arriving regularly. To keep up with the tools and their results, you can use Chatbot Arena, a free, crowdsourced comparison tool that lets you compare various AI models side by side. You won’t have every feature, such as extensions, but you can paste in text for analysis.

Also keep in mind that the questions you ask today may produce different answers later, as these systems are constantly updating their models and interfaces.

Ready to read research papers more efficiently? Find an interesting paper, open Claude, and put the steps above to work. You’ll be surprised how much faster you can uncover the key points. And if you want to take it to the next level, try Google NotebookLM to research multiple papers.